If You’re A Linguist, Don’t Sweat The Formal Math (Too Much) in Natural Language Processing

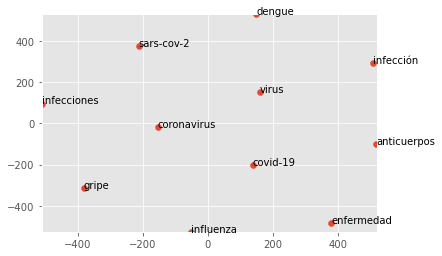

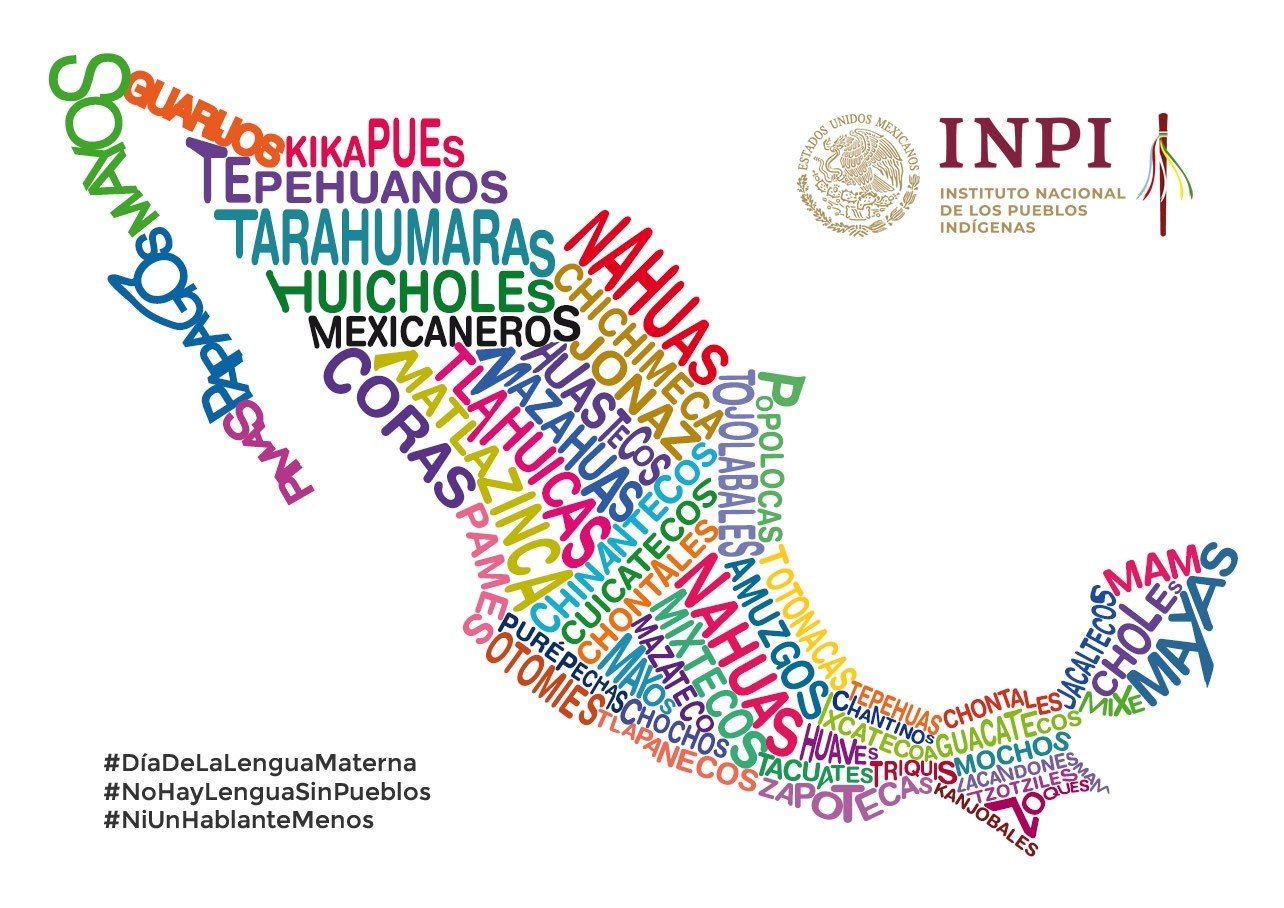

In Natural Language Processing (NLP), a foundational understanding of linear algebra does help (somewhat) when you are trying to make money in the field. Fundamentally, the concern for all of us philosophers, linguists, and psychologists is to earn a decent living without being penalized for our lack of immediate gratification in the workforce. The overarching […]